Master Natural Language Processing: A Complete Guide

This comprehensive guide explores everything you need to know about Natural Language Processing. We will break down how the technology works, explore its most powerful real-world applications, and compare the top tools available for developers. You will also learn how to avoid common pitfalls and implement expert strategies to successfully integrate these systems into your own business operations.

Have you ever wondered how your smartphone understands your voice commands or how email platforms automatically filter out spam? The secret behind these everyday conveniences is Natural Language Processing, a fascinating branch of artificial intelligence that bridges the gap between human communication and computer understanding.

Understanding Natural Language Processing

Natural Language Processing gives machines the ability to read, understand, and derive meaning from human languages. It is not just about translating words from one language to another; it is about grasping the context, intent, and sentiment behind the text. By combining computational linguistics with statistical, machine learning, and deep learning models, computers can process human language in the form of text or voice data to fully comprehend its full meaning.

For decades, computers only understood structured data like spreadsheets and databases. Humans, however, communicate in unstructured formats full of slang, idioms, sarcasm, and complex grammar. Natural Language Processing breaks down these massive volumes of unstructured text, organizing them into formats that machines can compute, analyze, and act upon. This capability forms the backbone of many modern artificial intelligence solutions used by global enterprises.

How Does It Actually Work?

To understand human speech or text, a computer must go through a series of complex steps. First, the system ingests the raw data. This data then undergoes preprocessing, which involves cleaning and standardizing the text. Common preprocessing steps include tokenization (breaking text into smaller pieces called tokens), stop word removal (filtering out common words like “the” or “is”), and stemming or lemmatization (reducing words to their root forms).

Once the text is cleaned, the Natural Language Processing system applies various algorithms to extract meaning. Rule-based systems rely on manually crafted grammatical rules, while modern statistical and machine learning systems learn from vast amounts of training data. Deep learning models, particularly neural networks, have revolutionized this phase by automatically detecting intricate patterns in language without requiring explicit human programming.

Key Components of Language Analysis

When you dive deeper into Natural Language Processing, you will find that analyzing language requires breaking it down into distinct linguistic levels. Each level tackles a different aspect of human communication.

Syntax and Grammar

Syntax refers to the arrangement of words in a sentence to make grammatical sense. Natural Language Processing algorithms use syntax to assess meaning based on grammatical rules. Key techniques in syntax analysis include parsing, which involves diagramming a sentence to understand its grammatical structure, and part-of-speech tagging, which identifies whether a word is a noun, verb, adjective, or another part of speech.

If a system cannot understand syntax, it cannot differentiate between “The dog bit the man” and “The man bit the dog.” Proper syntactic analysis ensures the machine processes the precise structure of the information provided.

Semantics and Pragmatics

While syntax deals with structure, semantics focuses on the actual meaning of the words. Semantic analysis helps machines understand the exact meaning of a text in a given context. For example, word sense disambiguation helps the system determine whether the word “bark” refers to a tree’s outer layer or the sound a dog makes.

Pragmatics takes this a step further by looking at the broader context of the conversation. It involves understanding the intended effect of the language. When someone says, “Can you pass the salt?” pragmatics tells the machine that this is a request for action, not a yes-or-no question about physical capability. Mastering pragmatics is essential for developing highly responsive customer experience platforms.

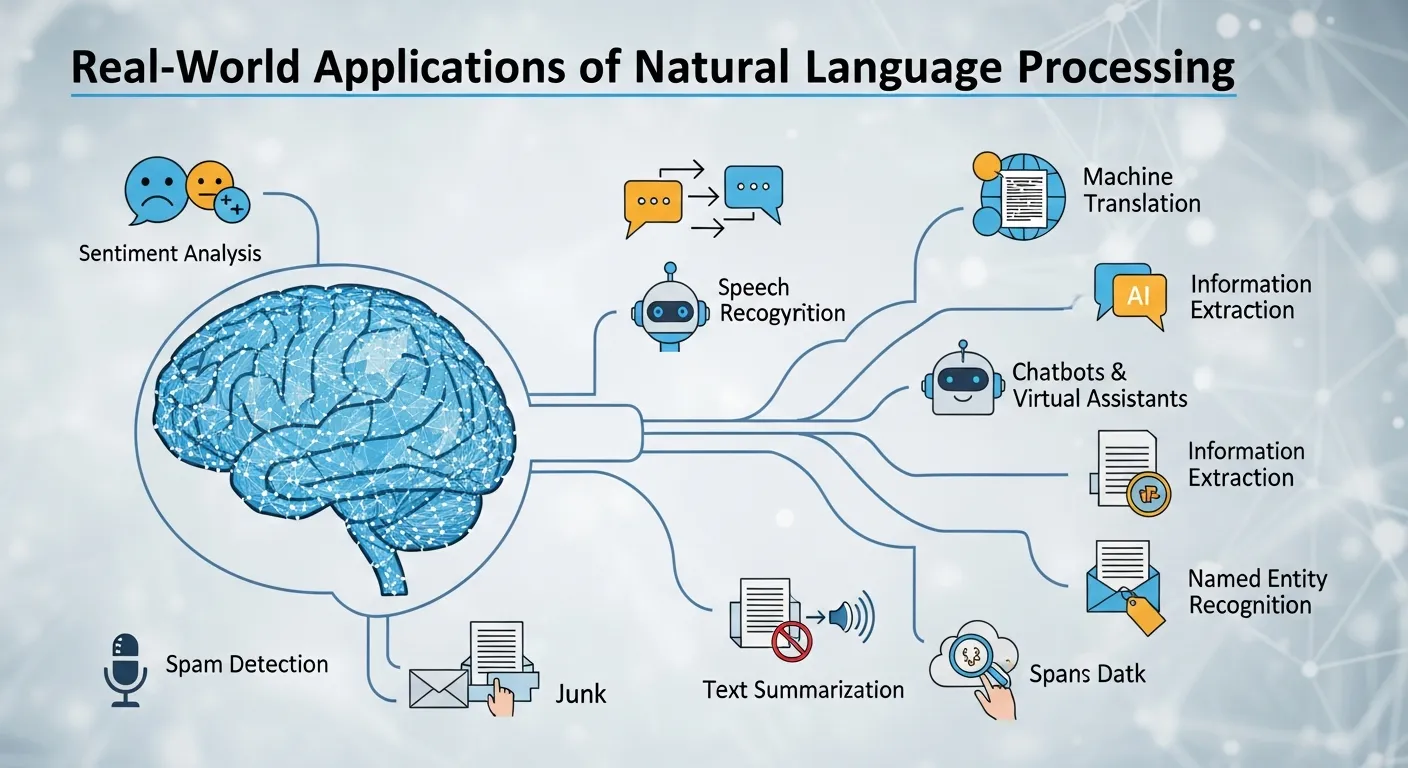

Real-World Applications of Natural Language Processing

The theoretical concepts of language processing have rapidly evolved into practical tools that drive massive business value. Natural Language Processing is everywhere, subtly powering the software and services we rely on daily.

Sentiment Analysis

Brands need to know how customers feel about their products. Reading thousands of reviews or social media posts manually is impossible. Natural Language Processing automates this through sentiment analysis, evaluating text to determine if the underlying emotion is positive, negative, or neutral. Companies use these insights to monitor brand reputation, manage public relations crises, and improve product development.

Chatbots and Virtual Assistants

Virtual assistants like Siri, Alexa, and customer support chatbots rely heavily on Natural Language Processing to interact with users. These systems use speech recognition to convert spoken words into text, natural language understanding to grasp the user’s intent, and natural language generation to formulate an appropriate, human-sounding response. This seamless cycle allows businesses to offer 24/7 support without human intervention.

Machine Translation

Translating text from one language to another is one of the oldest challenges in computer science. Modern Natural Language Processing has vastly improved machine translation tools like Google Translate. By using deep learning and neural machine translation, these systems now consider the context of entire sentences rather than translating word by word, resulting in much more accurate and natural-sounding translations.

Text Summarization

In the age of information overload, professionals struggle to read every document, report, or article that crosses their desks. Text summarization uses Natural Language Processing to distill long documents into concise, accurate summaries. Extractive summarization pulls the most important sentences directly from the text, while abstractive summarization generates new sentences that capture the core concepts, much like a human would.

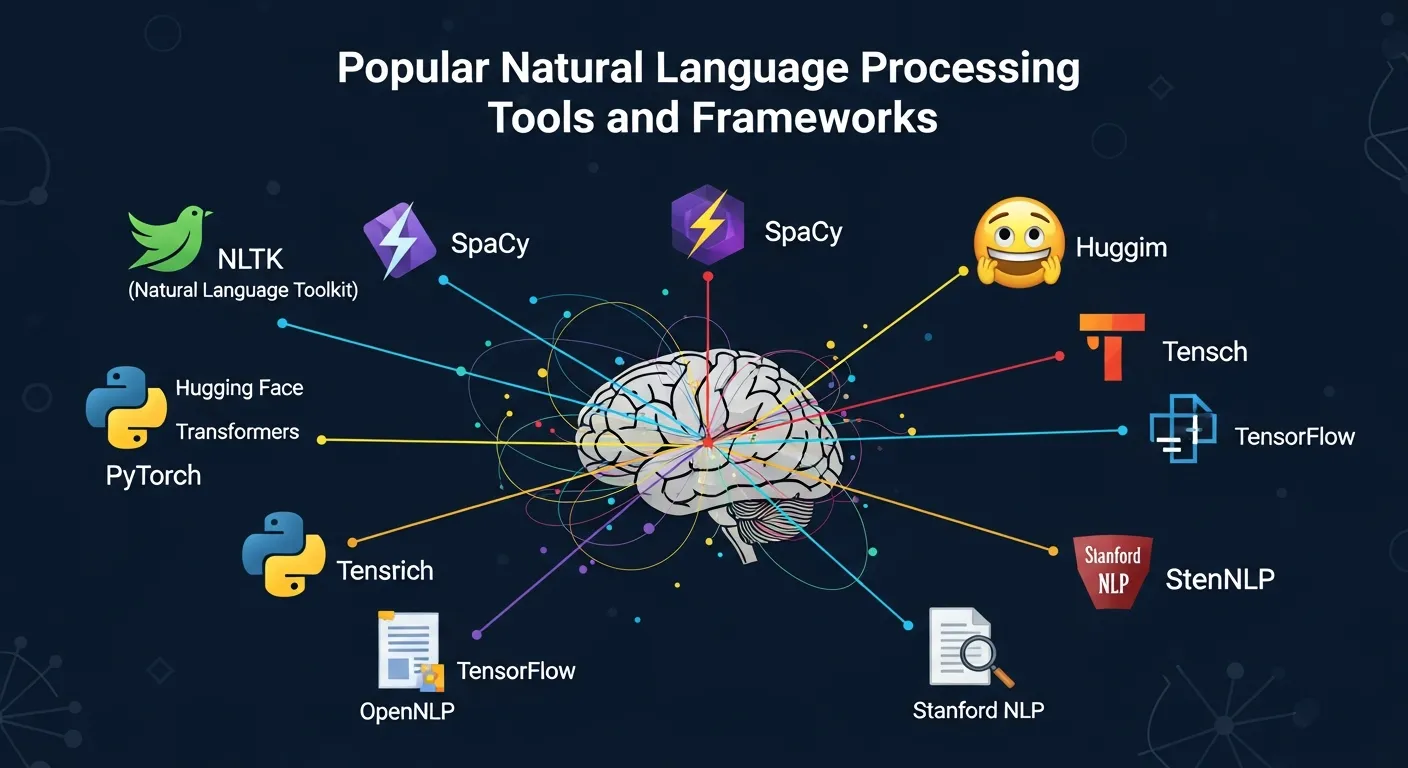

Popular Natural Language Processing Tools and Frameworks

Developers and data scientists have access to a wealth of powerful tools to build intelligent language applications. Choosing the right framework depends on your specific use case, technical expertise, and project scale.

|

Tool / Framework |

Primary Use Case |

Key Features |

Best For |

|---|---|---|---|

|

NLTK (Natural Language Toolkit) |

Education and prototyping |

Extensive library of text processing algorithms |

Beginners and academic researchers |

|

spaCy |

Production-level language processing |

High speed, pre-trained statistical models |

Enterprise applications requiring scale |

|

Hugging Face |

Advanced deep learning tasks |

Transformers, state-of-the-art accuracy |

Complex projects needing context awareness |

|

Gensim |

Topic modeling and document similarity |

Memory independence, scalable algorithms |

Uncovering hidden structures in large text corpora |

|

Stanford CoreNLP |

Linguistic analysis |

Robust part-of-speech tagging and parsing |

High-accuracy linguistic research |

If you want to explore the academic foundations of these tools, organizations like the Stanford NLP Group publish extensive research that continues to push the boundaries of what these frameworks can achieve.

Implementing Natural Language Processing in Your Business

Adopting these technologies can transform how your business operates, but it requires a structured approach. Simply throwing data at an algorithm will not yield meaningful results.

Step-by-Step Guidance

- Define the Business Problem: Do not adopt AI just for the sake of it. Identify a specific problem. Are you trying to reduce customer support ticket times? Do you need to extract data from thousands of legal contracts? Clearly defining the goal dictates the type of Natural Language Processing solution you need.

- Gather and Clean Your Data: Algorithms are only as good as the data they consume. Collect relevant text data from your CRM, emails, or social media channels. Ensure you allocate significant time to clean this data, removing duplicates, formatting issues, and irrelevant noise.

- Choose the Right Approach: Decide whether your problem requires a simple rule-based approach or a complex deep learning model. For many businesses, utilizing pre-trained models via APIs from providers like OpenAI or Google Cloud is much more efficient than building a neural network from scratch.

- Develop and Train the Model: If you are building a custom solution, split your data into training and testing sets. Train your Natural Language Processing model to recognize the specific terminology and patterns relevant to your industry.

- Evaluate and Iterate: Test the model’s accuracy. Language is dynamic, and user behavior changes. Continuously monitor your system’s performance and retrain it with new data to prevent accuracy degradation over time.

Implementing these steps successfully requires a strong foundation in data management strategies to ensure your models are fed with high-quality, secure information.

Common Mistakes to Avoid in Natural Language Processing Projects

Even experienced engineering teams can stumble when deploying language models. Avoiding these common errors will save your organization time, money, and frustration.

Ignoring Domain-Specific Language

A model trained on general Wikipedia articles will perform poorly if you ask it to analyze complex medical records or highly technical legal documents. Every industry has its own jargon, acronyms, and context. Failing to fine-tune your Natural Language Processing models on domain-specific data will result in severe inaccuracies and misinterpretations.

Neglecting Data Quality and Bias

Language models learn human biases present in their training data. If your historical data contains biased language regarding gender, race, or demographics, your model will replicate and amplify those biases. Always audit your training datasets for fairness and representation. Furthermore, poor quality data full of typos and formatting errors will directly degrade the performance of any Natural Language Processing algorithm.

Overcomplicating the Solution

Not every problem requires a massive, multi-billion-parameter neural network. If you only need to search documents for specific keywords or classify straightforward support tickets, simpler rule-based systems or traditional machine learning algorithms might be faster, cheaper, and easier to maintain. Match the complexity of the technology to the complexity of the problem.

Expert Tips and Pro Strategies

To maximize the return on your investment in language technology, consider these advanced strategies utilized by industry leaders.

- Leverage Transfer Learning: Instead of training a model from scratch—which requires massive amounts of data and computing power—use transfer learning. Start with a pre-trained language model like BERT or GPT, and fine-tune it with a smaller dataset specific to your business. This dramatically reduces development time.

- Prioritize Human-in-the-Loop Systems: Completely automating language tasks is risky, especially in critical areas like healthcare or finance. Implement a human-in-the-loop strategy where the Natural Language Processing system handles the bulk of the work, but flags low-confidence predictions for human review. This ensures high efficiency without sacrificing accuracy.

- Focus on Multilingual Capabilities: If your business operates globally, do not restrict your Natural Language Processing efforts to English. Invest in models capable of cross-lingual understanding. This allows you to deploy chatbots and sentiment analysis tools across different regions simultaneously, maintaining a consistent customer experience worldwide.

- Keep Up with State-of-the-Art Research: The field of artificial intelligence moves at an astonishing pace. Regularly review documentation and updates from authoritative platforms like the NLTK Documentation and leading research journals. Adopting new architectures as they emerge can provide a significant competitive advantage.

- Optimize for Latency: Deep learning models can be slow. If you are deploying Natural Language Processing for real-time applications like voice assistants, optimize your models for speed. Techniques like model quantization and pruning reduce the size of the neural network, allowing it to process language much faster without a major drop in accuracy.

The Future of Human-Computer Interaction

The trajectory of Natural Language Processing points toward even more seamless integration into our daily lives. We are moving beyond simple text classification into the realm of true conversational AI. Future models will possess enhanced emotional intelligence, detecting not just what a person is saying, but the subtle emotional state behind the words.

Furthermore, the integration of Natural Language Processing with computer vision—allowing machines to describe images in natural language or generate images from text prompts—is opening entirely new frontiers for creative industries and accessibility tools. As the technology becomes more accessible and requires less specialized coding knowledge, businesses of all sizes will be able to harness the power of language analysis.

If you are looking to stay ahead of the curve, ensure your technical teams are well-versed in the latest cloud computing frameworks, as these platforms provide the necessary infrastructure to scale massive language models efficiently.

Conclusion

Natural Language Processing has completely revolutionized how businesses interact with data, customers, and technology. By understanding the core mechanics, leveraging the right frameworks, and avoiding common implementation mistakes, you can unlock incredible value hidden within your unstructured text data. Whether you want to automate customer service, gauge public sentiment, or streamline document analysis, mastering Natural Language Processing is essential for the future of your business. Start assessing your data today, choose a pilot project, and take your first step into the era of intelligent language technology.

FAQs

1. What exactly is Natural Language Processing?

Natural Language Processing is a specialized branch of artificial intelligence that gives computers the ability to understand, interpret, and manipulate human language. It allows machines to read text, hear speech, analyze its meaning, and determine the sentiment or intent behind it.

2. How is Natural Language Processing different from Machine Learning?

Machine learning is a broader concept where computers learn from data to make predictions or decisions. Natural Language Processing is a specific application that often uses machine learning algorithms specifically focused on analyzing and generating human text and speech.

3. What are some common examples of Natural Language Processing in everyday life?

You encounter this technology daily. Common examples include spam filters in your email, predictive text and autocorrect on your smartphone, voice-activated assistants like Siri or Alexa, and the translation features used in Google Translate.

4. What programming languages are best for Natural Language Processing?

Python is overwhelmingly the most popular programming language for this field. It boasts a massive ecosystem of libraries and frameworks such as NLTK, spaCy, and Hugging Face, making it the industry standard for developing intelligent language applications.

5. How does sentiment analysis work?

Sentiment analysis uses Natural Language Processing to determine the emotional tone behind a series of words. The algorithm is trained on large datasets of text labeled with emotions (positive, negative, neutral) and learns to identify words, phrases, and contexts that indicate specific sentiments.

6. Is Natural Language Processing difficult to learn?

While the underlying mathematics and neural network architectures can be highly complex, getting started has never been easier. There are numerous open-source libraries and APIs that abstract the heavy lifting, allowing developers to implement powerful language features with just a few lines of code.

7. Why do Natural Language Processing models sometimes fail to understand context?

Human language is inherently ambiguous, heavily reliant on context, sarcasm, and cultural idioms. Older models analyze words in isolation, missing the broader meaning. However, newer transformer-based models are much better at understanding context because they analyze the relationship between all words in a sentence simultaneously.

8. Can Natural Language Processing handle multiple languages?

Yes. While early models focused primarily on English due to data availability, modern frameworks are highly multilingual. Advanced deep learning models can translate between dozens of languages and even perform cross-lingual tasks, such as training a model in English and deploying it to analyze text in Spanish.

9. What is the role of tokenization in text analysis?

Tokenization is a fundamental preprocessing step in Natural Language Processing. It involves breaking down a larger body of text into smaller, manageable units called tokens. These tokens can be individual words, subwords, or even punctuation marks, which the algorithm then analyzes individually.

10. How can small businesses afford Natural Language Processing technology?

Small businesses do not need to build expensive models from scratch. Cloud service providers offer pre-built Natural Language Processing tools via APIs on a pay-as-you-go basis. This allows small businesses to easily integrate powerful features like sentiment analysis or chatbots into their software at a fraction of the cost.